Cloned Code

Surely most developers have been in this situation on one or more occasions: You take over a huge code base. Now you are the one responsible for its maintenance and development. Lots and lots of code, and initially almost impossible to understand. Of course code comments and supporting documentation is minimal or even absent. Soon you also realize that a lot of duplicated code exists.

The previous developers must have thought they were smart by copying-pasting code instead of creating new common functions that could be called from several places. Apparently they were not aware of the DRY principle!

This behavior of course creates a lot of problems for the future. Like a larger code mass to handle to start with. Modifying code in one place forces one to find and correct all other places with similar or equivalent code. Missing one of those places can have major consequences.

So finding and refactor these occurrences of cloned code could improve the code base and make it easier to work with. But how to do that?

How to find and manage cloned code, or duplicate code is actually a science of its own. Those working with the cloned code problem write papers and doctorate on the subject, or go to conferences.

In Pascal Analyzer we have taken a pragmatic approach to the problem with cloned code. In order to find cloned code, our solution builds on capabilities that we already have, like a robust lexer and parser.

Consequently there is a new report in Pascal Analyzer to locate cloned code. It compares code and suggest places where it is most likely that code has been cloned.

There are currently two sections in this report:

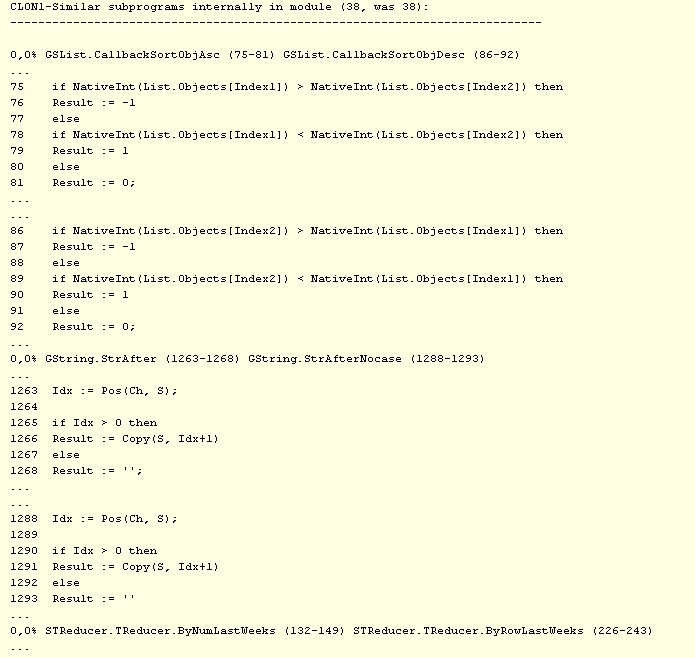

- CLON1: Similar subprograms internally in module

- CLON2: Similar subprograms over all modules

Both these report sections analyze and compare code on a function (subprogram) level. They display code that is same or almost same in a pair of functions. Results are presented as percent values (sum of edit-distances divided by number of tokens), so gives a measurement for how similar they are.

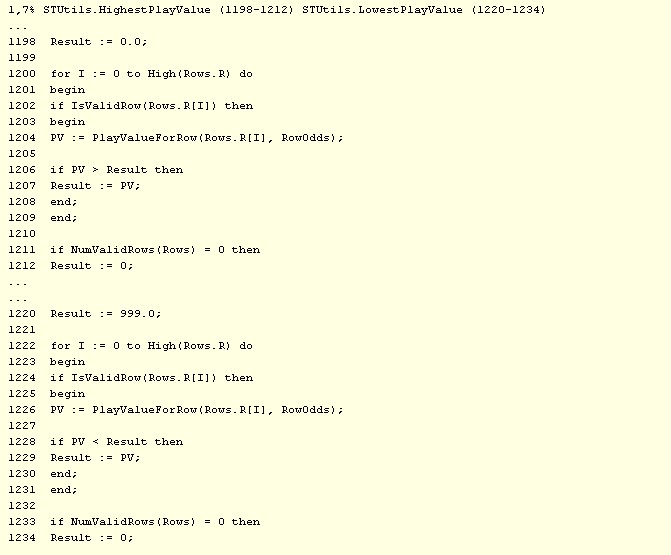

In the examples above, the results show 0,0%, which means that these subprograms are considered equal. For differing subprograms, the percent value will increase. Here is an example of two subprograms that are almost similar, so their percent value is 1.7 in this case:

You can then use these results to do some clever refactoring, like removing duplicated code by moving it to common functions. As always, you should not consider the reports like some kind of absolute truth, but more like hints for where you can improve.

How does it work? Well, Pascal Analyzer tokenizes the code and then compares different functions and statements to determine how similar they are to each other. It does this in a smart way.

For example, how identifiers are named has no effect. Code that is identical apart from identifiers names will produce a perfect match. Even so whitespace and format style will have as small impact as possible.

When code is compared, Pascal Analyzer uses an algorithm known as edit-distance. It detects how many changes are needed to convert a piece of code to another one. The less changes needed, more likely is it that code has been copied.

We also have a set of report sections that compare code on a code block level instead of on a function level. But this is a whole lot more time-consuming, so these reports are not yet included in the application. Hopefully they will be released at a later time.

If you are not yet a user of Pascal Analyzer, and want to try this report, you can download the evaluation version. It will however only show a small fraction of all results, but you could still get a feeling for how these and other reports work.